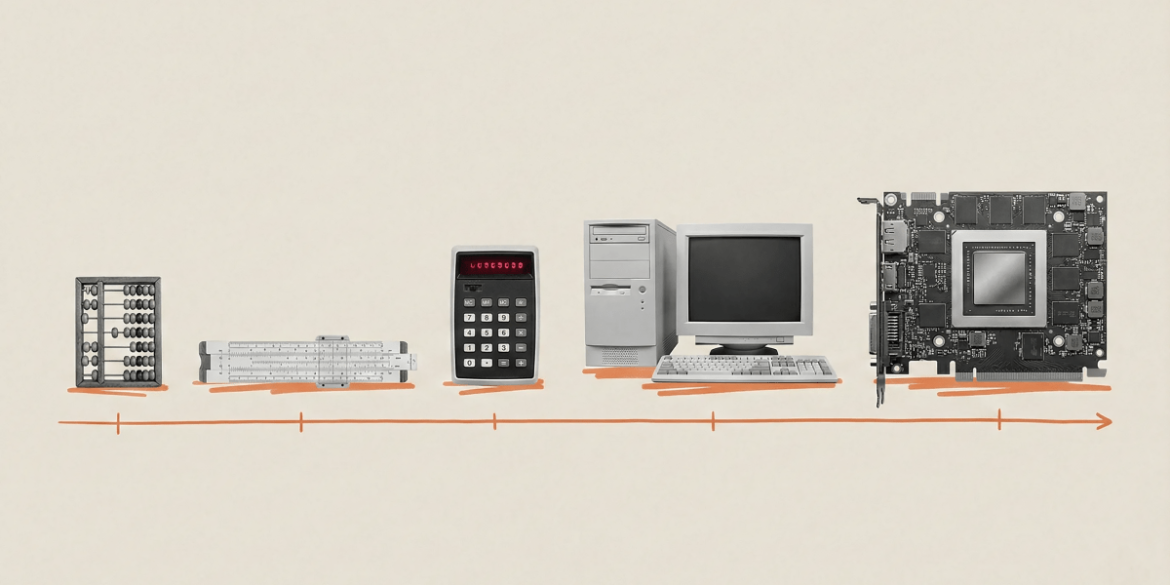

We evolved for a linear world. If you walk for an hour, you cover a certain distance. Walk for two hours and you cover twice that distance. This intuition served us well on the savannah. But it fails badly when applied to AI and the exponential trends at its core.

From when I started working on AI in 2010 to the present, the amount of training compute used in cutting-edge AI models has grown by an astonishing 1 trillion times, from approximately 10¹⁴ flops (floating-point operations, the fundamental unit of computation) for early systems to more than 10²⁶ flops for the largest models today. This is a massive leap. Everything else in AI follows from this fact.
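To make that growth concrete, here is a rough back-of-the-envelope sketch in Python. It takes the two figures above and converts them into an implied yearly growth factor and doubling time. The 16-year span is an assumption (2010 to 2026, inferred from the essay's own dates), and the function names are illustrative only.

```python
import math


def annual_growth(start: float, end: float, years: float) -> float:
    """Constant yearly multiplier that turns `start` into `end` over `years`."""
    return (end / start) ** (1.0 / years)


def doubling_time_months(annual_factor: float) -> float:
    """Months needed to double at a constant yearly multiplier."""
    return 12.0 * math.log(2.0) / math.log(annual_factor)


START_FLOPS = 1e14  # early systems, as quoted in the text
END_FLOPS = 1e26    # largest models today, as quoted in the text
YEARS = 16          # assumption: 2010 to 2026

total = END_FLOPS / START_FLOPS
per_year = annual_growth(START_FLOPS, END_FLOPS, YEARS)
months = doubling_time_months(per_year)

print(f"total growth:  {total:.0e}x")
print(f"per year:      {per_year:.2f}x")
print(f"doubling time: {months:.1f} months")
```

Under that assumption the trend works out to a little under 6x per year, or a doubling roughly every five months. A linear intuition has no way to anticipate that.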

Think of AI training as a room full of people working calculators. For years, adding computational power meant bringing more people with calculators into that room. Often, those workers sat idle, drumming their fingers on their desks, waiting for the numbers they needed for their next calculation. Every pause was wasted potential. Today's revolution is about more than having more and better calculators (although it delivers those); it is about making sure all of those calculators never stop, and that they work together as one.

Three advancements are currently converging to facilitate this. First, the basic calculators have accelerated. Nvidia’s chips have achieved over a sevenfold enhancement in raw performance within just six years, from 312 teraflops in 2020 to 2,250 teraflops today. Our own Maia 200 chip, introduced this January, offers 30% greater performance per dollar than any other hardware in our lineup. Second, the data arrives more swiftly due to a technology called HBM, or high bandwidth memory, which stacks chips vertically like miniature skyscrapers; the latest iteration, HBM3, triples the bandwidth of its predecessor, supplying data to processors quickly enough to keep them occupied constantly. Third, the room of people with calculators has transformed into an office and subsequently a whole campus or city. Technologies like NVLink and InfiniBand connect hundreds of thousands of GPUs into warehouse-sized supercomputers that operate as single cognitive units. A few years ago, this was unthinkable.

These improvements combine to deliver far more computational power. Where training a language model took 167 minutes on eight GPUs in 2020, it now takes less than four minutes on comparable modern hardware. To put this in perspective: Moore's Law would predict only about a 5x improvement over this period. We saw roughly 50x. We have gone from two GPUs training AlexNet, the image recognition model that kicked off the modern deep learning boom in 2012, to more than 100,000 GPUs in today's largest clusters, each one vastly more capable than its predecessors.
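The comparison with Moore's Law can be checked with simple arithmetic. The sketch below is illustrative only: the five-year window and the 24-month doubling period are assumptions chosen to roughly match the "about 5x" figure, and the four-minute value is an upper bound on the new training time, so the measured speedup it prints is a lower bound.

```python
def measured_speedup(old_minutes: float, new_minutes: float) -> float:
    """How many times faster the same training job finishes."""
    return old_minutes / new_minutes


def moore_factor(years: float, doubling_months: float = 24.0) -> float:
    """Improvement predicted if performance doubles every `doubling_months`."""
    return 2.0 ** (years * 12.0 / doubling_months)


OLD_MINUTES = 167.0  # eight GPUs in 2020, as quoted in the text
NEW_MINUTES = 4.0    # "less than four minutes", so an upper bound
YEARS = 5.0          # assumption: length of the comparison window

speedup_floor = measured_speedup(OLD_MINUTES, NEW_MINUTES)
moore = moore_factor(YEARS)

print(f"measured speedup: at least {speedup_floor:.1f}x")
print(f"Moore's Law:      about {moore:.1f}x")
print(f"gap:              at least {speedup_floor / moore:.1f}x")
```

Even at this lower bound, the measured gain is several times what chip scaling alone would deliver. The rest comes from memory bandwidth, interconnects, and software.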

Then there is the revolution in software. Research from Epoch AI indicates that the compute needed to reach a fixed level of performance halves roughly every eight months, much faster than the traditional 18-to-24-month doubling associated with Moore's Law. The cost of serving some recent models has fallen by a factor of up to 900 on an annualized basis. AI is becoming radically cheaper to deploy.

The projections for the near future are just as striking. Leading labs are expanding capacity at nearly 4x per year. Since 2020, the compute used to train frontier models has grown 5x per year. Global AI-relevant compute is forecast to reach 100 million H100 equivalents by 2027, a tenfold increase in three years. Put all of this together and we anticipate roughly another 1,000x in effective compute by the end of 2028. It is plausible that by 2030 we will be adding an extra 200 gigawatts of compute capacity each year, comparable to the peak electricity demand of the UK, France, Germany, and Italy combined.

What does all this amount to? I believe it will drive the shift from chatbots to nearly human-level agents: semiautonomous systems capable of writing code for days, carrying out projects that last weeks or months, making calls, negotiating agreements, and managing logistics. Forget basic assistants that answer questions. Think of teams of AI workers that deliberate, collaborate, and execute. We are only at the beginning of this transition, and the implications extend far beyond the technology sector. Every industry built on cognitive work will be transformed.

The obvious constraint is energy. A single refrigerator-sized AI rack draws 120 kilowatts, about as much as 100 households. Yet this demand is running into another exponential trend: solar prices have fallen by nearly 100 times over 50 years, and battery costs have dropped by 97% over three decades. A pathway to sustainable scaling is emerging.

Mustafa Suleyman is CEO of Microsoft AI.