In collaboration with Mistral AI

In the early days of large language models (LLMs), we grew accustomed to 10x jumps in reasoning and coding capability with each new model version. Today, those leaps have flattened into incremental gains. The exception is specialized domain intelligence, where true step-function improvements are still common.

When a model is integrated with an organization’s proprietary data and internal logic, it carries the company’s history into its future workflows. The result is a compounding advantage: a competitive moat built on a model that understands the business deeply. This goes beyond fine-tuning; it is the institutionalization of expertise in an AI system. That is the power of customization.

Intelligence molded for context

Each industry functions within its distinct lexicon. In automotive engineering, the organization’s “language” revolves around tolerance stacks, validation cycles, and revision control. In capital markets, reasoning is governed by risk-weighted assets and liquidity buffers. In security operations, patterns are derived from the ambient noise of telemetry signals and identity discrepancies.

Customized models internalize the subtleties of the sector. They identify which variables influence a “go/no-go” decision and articulate thoughts in industry-specific terms.

Implementation of domain expertise

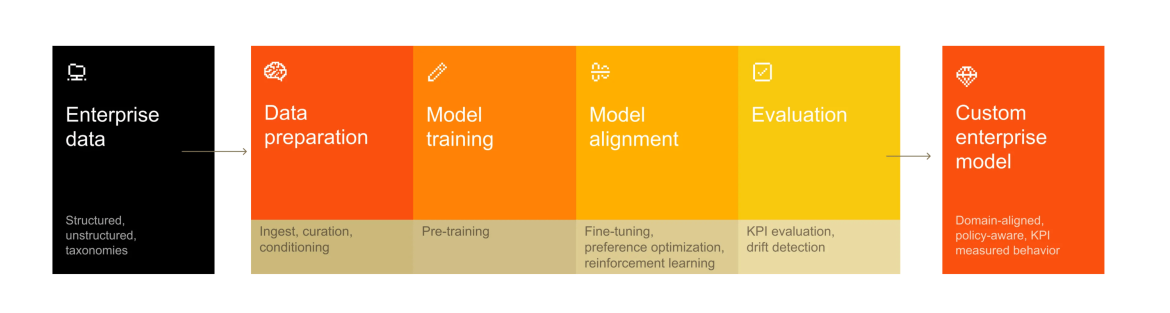

The shift from general AI to tailored AI focuses on one objective: embedding an organization’s distinctive logic directly into a model’s weights.

Mistral AI collaborates with organizations to infuse domain expertise into their training ecosystems. Several use cases exemplify customized applications in action:

Software development and large-scale assistance: A network hardware organization with proprietary languages and specialized codebases discovered that off-the-shelf models couldn’t grasp their internal architecture. By training a custom model on their specific development methodologies, they achieved a significant improvement in proficiency. Integrated into Mistral’s software development framework, this tailored model now supports the entire lifecycle—from managing legacy systems to autonomous code modernization through reinforcement learning. This transforms previously opaque, niche code into a domain where AI reliably operates at scale.

Automotive and the engineering copilot: A top automotive firm employs customization to transform crash test simulations. Previously, experts dedicated entire days to manually comparing digital simulations with physical results to identify discrepancies. By training a model on proprietary simulation data and internal evaluations, they automated this visual review, flagging deformations in real time. Beyond mere detection, the model now functions as a copilot, suggesting design modifications to align simulations more closely with real-world behavior and significantly accelerating the R&D process.

Public sector and sovereign AI: In Southeast Asia, a government agency is creating a sovereign AI framework to move beyond Western-centric models. By commissioning a foundational model tailored to regional languages, local idioms, and cultural contexts, they established a strategic infrastructure asset. This ensures sensitive data remains under local control while enhancing inclusive citizen services and regulatory support. Here, customization is crucial for deploying AI that is both technically adept and genuinely sovereign.

A blueprint for strategic customization

Moving from a general-purpose AI strategy to a domain-specific advantage requires rethinking the model’s role within the organization. Success is defined by three shifts in organizational logic.

1. Treat AI as infrastructure, not an experiment. Traditionally, organizations have treated model customization as a temporary experiment: a one-off fine-tuning run for a specialized application or a localized pilot project. While these tailored silos often yield encouraging results, they are seldom designed to scale. They produce fragile pipelines, improvised governance, and limited portability. As base models evolve, the adaptation work often has to be discarded and rebuilt from scratch.

By contrast, a sustainable strategy treats customization as foundational infrastructure. In this approach, adaptation processes are reproducible, version-controlled, and engineered for production. Success is measured by concrete business outcomes. By decoupling the customization logic from the underlying base model, companies ensure that their “digital nervous system” remains robust even as the frontier of base models shifts.
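One way to picture this decoupling is a versioned adaptation "recipe" that records what the customization is without naming the base model it runs on. The sketch below is illustrative only: the `AdaptationRecipe` class, its fields, the `plan_run` helper, and the base model labels are all hypothetical and do not describe any specific Mistral AI tooling.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class AdaptationRecipe:
    """Hypothetical description of a customization, independent of any base model."""
    name: str
    dataset_version: str
    method: str
    eval_suite: str
    hyperparameters: dict = field(default_factory=dict)

    def fingerprint(self) -> str:
        # Hash a stable JSON rendering so the same recipe always gets the same ID.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


def plan_run(recipe: AdaptationRecipe, base_model: str) -> dict:
    """Pair a recipe with a base model; only the pairing changes when the base model does."""
    return {
        "base_model": base_model,
        "recipe": recipe.name,
        "recipe_fingerprint": recipe.fingerprint(),
    }


recipe = AdaptationRecipe(
    name="crash-sim-review",
    dataset_version="2024-06",
    method="supervised-fine-tuning",
    eval_suite="internal-deformation-benchmark",
    hyperparameters={"epochs": 3, "learning_rate": 1e-5},
)

# Re-running the same recipe against a newer (hypothetical) base model.
run_old = plan_run(recipe, "base-model-v1")
run_new = plan_run(recipe, "base-model-v2")
```

Because the fingerprint depends only on the recipe, the same adaptation can be replayed and audited when a new base model arrives, rather than rebuilt from scratch.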

2. Maintain control over your data and models. As AI moves from peripheral activities to core operations, the question of control becomes critical. Dependence on a single cloud provider or vendor for model alignment creates a troubling power imbalance over data residency, pricing, and architectural updates.

Organizations that retain authority over their training pipelines and deployment environments safeguard their strategic autonomy. By adapting models within controlled settings, organizations can enforce their own data residency norms and dictate their own update schedules. This method shifts AI from a consumed service to a governed asset, minimizing structural dependence and enabling cost and energy optimizations aligned with internal objectives rather than vendor timelines.

3. Design for ongoing adaptation. The enterprise landscape is never static: regulations change, taxonomies develop, and market conditions vary. A frequently observed shortcoming is treating a tailored model as a completed artifact. In truth, a domain-aligned model is a dynamic asset that can experience model decay if neglected.

Designing for continual adaptation necessitates a systematic approach to ModelOps. This encompasses automated drift detection, event-driven retraining, and incremental updates. By establishing the capacity for perpetual recalibration, the organization ensures that its AI not only mirrors its history but also evolves in tandem with its future. This stage marks the point where the competitive advantage starts to accumulate: the model’s utility expands as it internalizes the organization’s continuous response to changes.
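As a rough illustration of automated drift detection feeding an event-driven retraining trigger, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a recent sample of a single numeric signal, such as a model confidence score. PSI is only one of several possible drift measures and is chosen here for illustration; the function names, the bin count, and the 0.2 threshold (a commonly cited rule of thumb) are assumptions rather than anything prescribed in this article.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between two samples, using equal-width bins over the baseline range."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline must contain more than one distinct value")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor at eps so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def should_retrain(
    baseline: Sequence[float],
    current: Sequence[float],
    threshold: float = 0.2,  # assumed rule-of-thumb cutoff, tune per use case
) -> bool:
    """Event trigger: signal retraining when drift exceeds the threshold."""
    return population_stability_index(baseline, current) > threshold


baseline_scores = [i / 100 for i in range(100)]          # evenly spread scores
shifted_scores = [0.9 + i / 1000 for i in range(100)]    # scores bunched near the top
```

In this setup, `should_retrain(baseline_scores, baseline_scores)` stays quiet while `should_retrain(baseline_scores, shifted_scores)` fires. In practice such a check would run on a schedule or on incoming data events, and its threshold would be calibrated against the business outcome it protects.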

Control is the new leverage

We have entered a phase where generic intelligence is a commodity, but contextual intelligence is scarce. While raw model capability has become a baseline requirement, the true differentiator lies in alignment—AI fine-tuned to an organization’s specific data, mandates, and decision-making processes.

In the coming decade, the most valuable AI will not be the one that knows everything about the world; it will be the one that understands everything about you. The organizations that possess the model weights of that intelligence will dominate the market.

This content was developed by Mistral AI. It was not authored by the editorial team at MIT Technology Review.