“Reducing the bar for scrutiny”

Across 1,372 participants and more than 9,500 trials, the team found people accepted incorrect AI reasoning in 73.2 percent of cases and overturned it just 19.7 percent of the time. The authors say this shows people readily fold AI-generated outputs into their decisions with little resistance or skepticism. More generally, they argue that smooth, confident responses are often treated as epistemically authoritative, which lowers the bar for critical inspection and dulls the metacognitive signals that would normally trigger more careful deliberation.

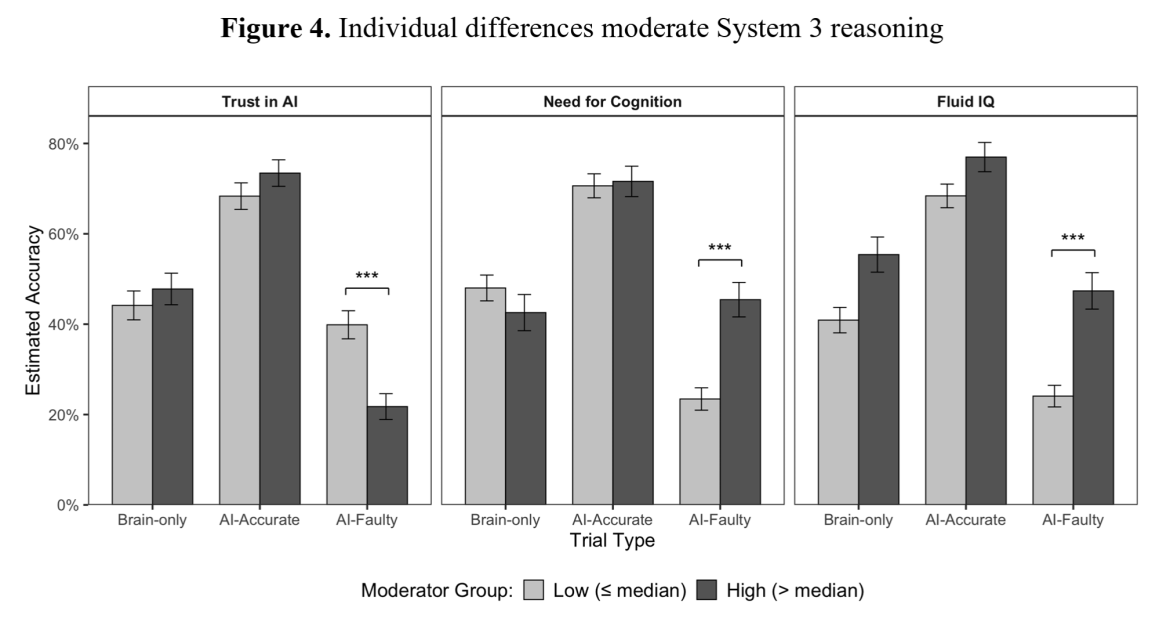

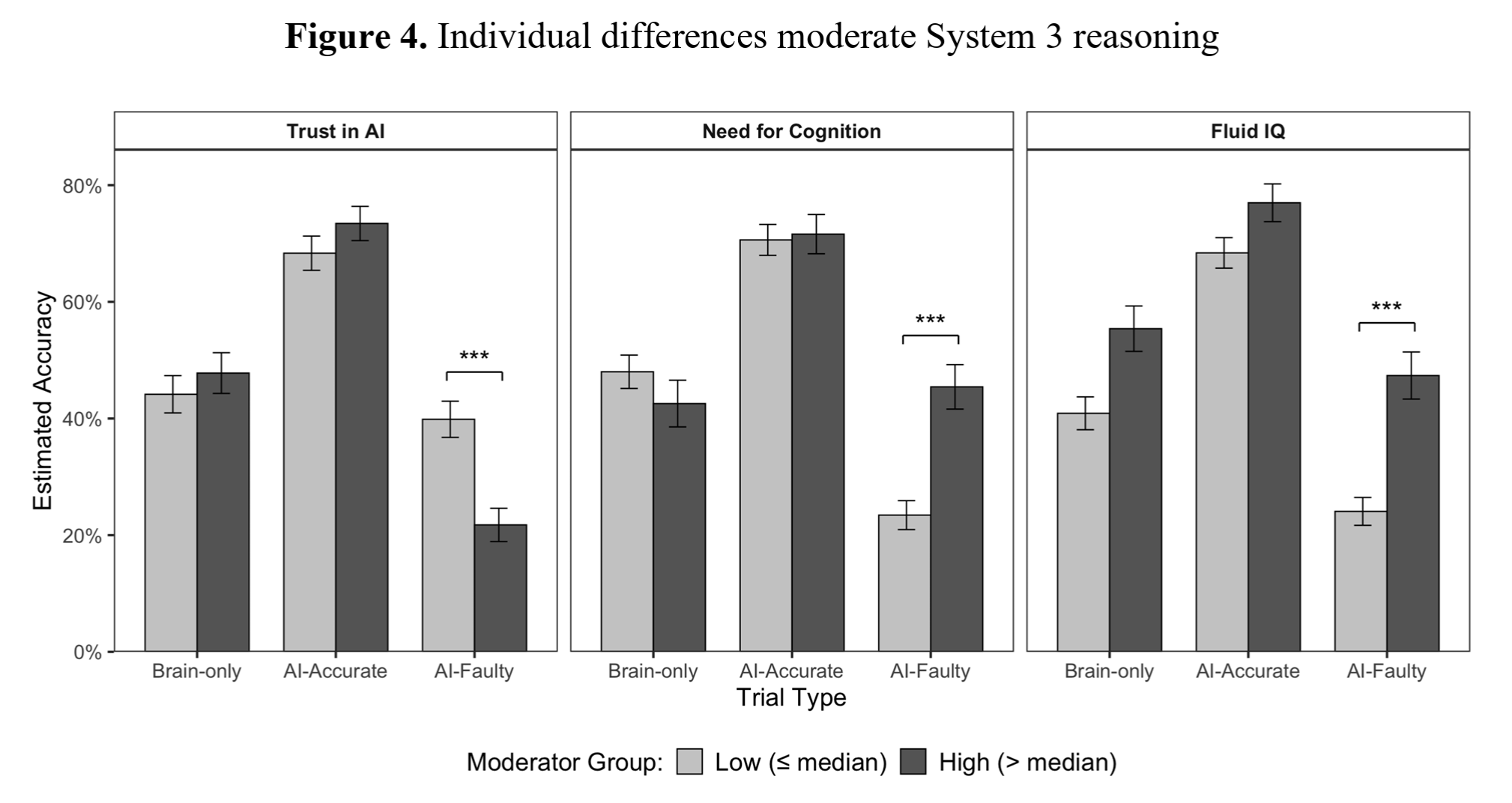

The effects varied across individuals. Those who scored well on independent assessments of fluid reasoning were less likely to lean on the AI and more prone to reject incorrect AI output. By contrast, people who rated AI as authoritative in surveys were considerably more likely to be led astray by erroneous AI answers.

The researchers note that “cognitive surrender is not inherently irrational.” While depending on an LLM that errs half the time (as in these tests) carries clear costs, a statistically superior system could still outperform humans in areas like probabilistic judgement, risk evaluation, or analysis of large datasets, they suggest.

They add that as reliance grows, performance tracks AI quality, improving when the system is accurate and declining when it is not. That pattern highlights both the potential of superintelligent systems and a built-in weakness of cognitive surrender.

Put differently, outsourcing your reasoning to an AI ties your judgments to that system’s competence. As always: let the prompter beware.