This article first appeared in The Algorithm, our weekly newsletter focused on AI. To receive stories like this directly in your inbox, subscribe here.

In January, Nvidia’s Jensen Huang, who leads the world’s most valuable company, announced that we are entering the era of physical AI, in which artificial intelligence will move beyond language and chatbots into machines with physical capabilities. (He made a similar statement the previous year, by the way.)

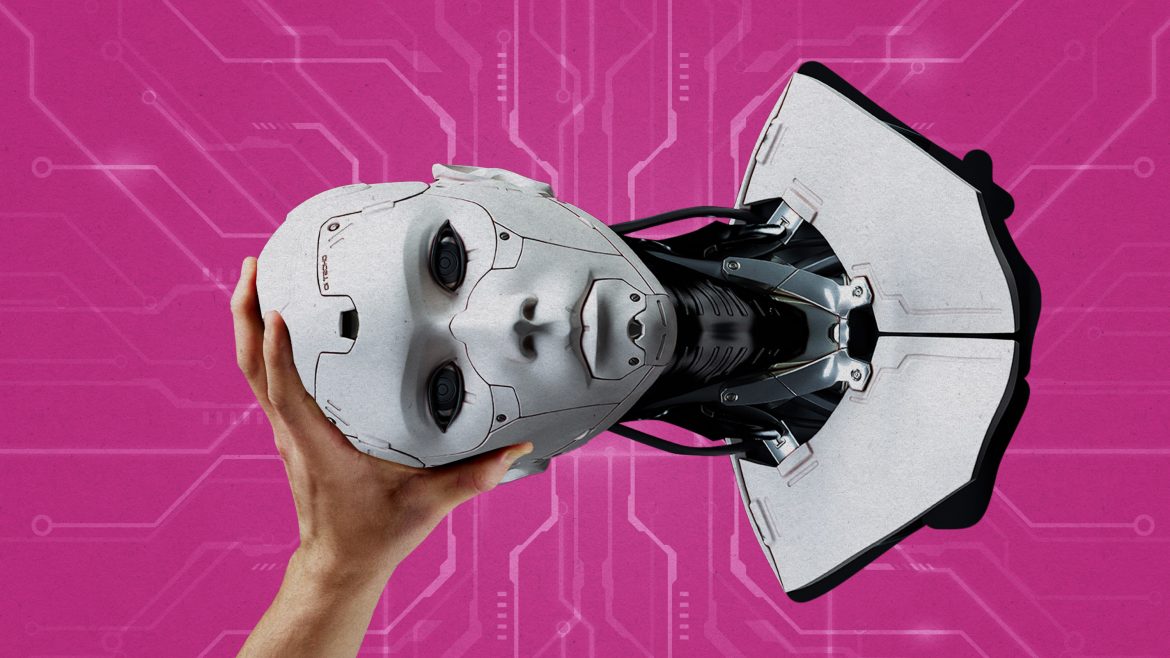

The implication, driven by recent showcases of humanoid robots putting dishes away or assembling cars, is that mimicking human limbs with single-purpose robotic arms is an outdated form of automation. The new approach is to build robots that emulate how humans think, learn, and adapt as they work. However, the lack of transparency about the human effort needed to train and operate these robots leads the public to misjudge what robots can actually do and to overlook the peculiar new types of work emerging around them.

Consider how, in the AI era, robots often learn from humans who demonstrate tasks. Producing this data at scale is now leading to Black Mirror–like scenarios. For instance, a worker in Shanghai recently spent a week wearing a virtual-reality headset and an exoskeleton, opening and closing a microwave door hundreds of times a day to train the robot next to him, as reported by Rest of World. In North America, the robotics firm Figure appears to be pursuing a similar strategy: It announced in September that it would partner with the investment company Brookfield, which oversees 100,000 residential units, to gather “massive amounts” of real-world data “across various household environments.” (Figure did not respond to inquiries about this initiative.)

Just as our words became training data for large language models, our actions are now poised to follow the same path. However, this future might leave humans worse off, and it’s already beginning. Roboticist Aaron Prather told me about recent work with a delivery company that had its employees wear movement-tracking sensors while carrying boxes; the resulting data will be used to train robots. Developing humanoids will likely require manual laborers to act as data providers on a massive scale. “It’s going to be strange,” Prather says. “No doubts about it.”

Or consider tele-operation. While the ultimate goal in robotics is a machine that can complete a task on its own, robotics companies hire people to control their robots remotely. Neo, a $20,000 humanoid robot from the startup 1X, is scheduled to ship to homes this year, but the company’s founder, Bernt Øivind Børnich, recently indicated that he is not committed to any particular level of autonomy. If the robot gets stuck, or if a customer asks it to do a difficult task, a tele-operator at the company’s headquarters in Palo Alto, California, will take control, seeing through the robot’s cameras to iron clothes or empty the dishwasher.

This is not inherently bad (1X obtains customer permission before entering tele-operation mode), but privacy as we know it will not exist in a world where tele-operators do household chores through a robot. And if home humanoids are not genuinely autonomous, the arrangement is better understood as a form of wage arbitrage that recreates the dynamics of gig work while, for the first time, allowing physical tasks to be performed remotely from wherever labor is cheapest.

We’ve been down similar roads before. Carrying out “AI-driven” content moderation on social media platforms or assembling training data for AI companies often requires workers in low-wage regions to view disturbing content. And despite claims that AI will soon train on its own outputs and learn independently, even the best models need a great deal of human feedback to work as intended.

The existence of these human workforces does not mean that AI is merely smoke and mirrors. Yet when they remain out of sight, the public consistently overestimates what the machines can actually do.

That is good for investors and for hype, but it has consequences for everyone. For instance, when Tesla marketed its driver-assistance system as “Autopilot,” it inflated public expectations of what the system could safely do, a distortion that a Miami jury recently found contributed to a fatal accident involving a 22-year-old woman (Tesla was ordered to pay $240 million in damages).

The same will be true of humanoid robots. If Huang’s prediction holds, and physical AI is poised to reshape our workplaces, homes, and public spaces, then how we describe and evaluate such technology matters. Yet robotics companies remain as opaque about training and tele-operation as AI firms are about their training data. If this status quo persists, we risk mistaking hidden human labor for machine intelligence, and seeing far more autonomy than actually exists.